Defining Autonomy and Challenges to the Certification of Learning-Enabled Systems in Aviation

Remotely piloted aircraft have been in operation for at least two decades. So, let’s first discuss what autonomy is in the aviation context. You have probably heard about the six levels of driving automation defined by the SAE J3016 standard, from Level 0 (no driving automation) to Level 5 (full driving automation). The SAE Levels of Driving Automation [1] were refined for clarity in May 2021 as follows:

Note that at SAE Level 5, the emphasis is on the capability of the automated driving features to “drive everywhere and in all conditions”.

Autonomy in Nature, AI, and Aviation

Embodiment is the idea that a brain exists in a body which interacts with its environment (the umwelt) and other agents who have their own goals. Autonomy is enabled by intrinsic motivation (e.g., food, water, reproduction, survival), goal-directed control of behavior, learning, memory, planning, and problem solving. The embodied and enactive accounts of language and cognition are well-established in neuroscience and the cognitive sciences.

Effective inter-agent interactions rely on a Theory of Mind (ToM) to predict the states and actions of other agents. Intelligent behavior can emerge from the interaction between agents. For example, in their drive to survive, predators and prey co-evolve through their interaction in nature.

Metacognition allows agents to monitor the accuracy and uncertainty of their perception of the environment and to assess and regulate their own reasoning and decision-making processes.

In the picture below, a Great Cormorant is transporting a branch for nest building which is useful for rest, courtship, and child-rearing. To protect the nest, the bird displays threatening behavior when approached by others.

Another example of extreme autonomy in nature is the bar-tailed godwit, which flies 7,000 miles each year from Alaska to New Zealand without stopping for food or rest. The birds make the journey by relying on an inner “GPS” that remains a mystery. The record holder based on satellite tracking flew 8,100 miles (13,000 kilometers) in 10 days [36]. Some researchers attribute the navigation abilities of bar-tailed godwits and other migratory birds to quantum effects in their eyes that allow them to perceive Earth’s magnetic field lines. A bar-tailed godwit that was being tracked recently made a U-turn back to Alaska due to bad weather, after flying for 57 hours non-stop [37].

Birds have a highly efficient energy metabolism and fascinating learning and cognitive abilities such as spatial memory, episodic-like memory, high-acuity vision, vocal learning, and motor control enabling sophisticated flight maneuvers (hummingbirds can hover and fly forwards and backwards). Pigeons have shown the ability to detect breast cancer in radiology images after a few days of training through reinforcement [54].

Energetic costs represent an evolutionary pressure not only on biological organisms [53] but also on engineered AI systems which will operate at the edge of the network. A promising direction of research leading to neuromorphic computing and energy-efficient AI is Vector Symbolic Architectures (VSA). Neuromorphic vision and control have shown promise for autonomous drone flight [58]. Today, birds continue to inspire aeronautical engineers with ideas such as flapping wings, morphing wings, aerofoil properties, winglets, guidance, navigation, and control (GNC), and cognition [57].

In terms of evolutionary theory, Artificial General Intelligence (AGI) is an adaptation of current narrow AI capabilities to increasingly complex physical real-world environments like autonomous vehicles operating on the ground, in the air, at sea, or in space. The AGI research agenda is fundamentally interdisciplinary taking inspiration from neuroscience, the cognitive sciences, biology, physics, complexity science, and other disciplines.

Another term that some people prefer is “Human-Level AI” or “Human-Like AI”. However, as with AlphaGo, it is possible that AI algorithms will find solutions that are unlike anything humans have ever seen: an “alien” kind of intelligence, so to speak. So, the term AGI leaves the solution space wide open for AI algorithms, and that is acceptable as long as we can provide formal mathematical verification of their safety.

In the context of flying an airplane safely, the essential functions of a pilot according to the US Federal Aviation Administration (FAA) are: Aviate, Navigate, and Communicate.

The American Society for Testing and Materials (ASTM) defines “autonomous” as:

“An entity that can, and has the authority to, independently determine a new course of action in the absence of a predefined plan to accomplish goals based on its knowledge and understanding of its operational environment and situation” [3].

The Joint Authorities for Rulemaking of Unmanned Systems (JARUS) Methodology for Evaluation of Automation for UAS Operations [42] published in April 2023 defines the following levels of automation:

Level 0 – Manual Operation

Level 1 – Assisted Operation

Level 2 – Task Reduction

Level 3 – Supervised Automation

Level 4 – Manage by Exception

Level 5 – Full Automation

Based on a review of SAE J3016, the ASTM’s TR1-EB Autonomy Design and Operations in Aviation: Terminology and Requirements Framework [3] defines the following modes of human involvement:

Human-In-the-Loop.

Human-On-the-Loop: a human operator may take control at any time if necessary.

Human-Off-the-Loop.

Level 5 Full Automation offers the following benefits:

Humans are kept out of harm’s way in dangerous or difficult operations such as the Suppression and Destruction of Enemy Air Defenses (SEAD/DEAD).

Ability to operate for long periods of time without consideration for human fatigue.

Low latency of control in dynamic environments where split-second response time is needed (e.g., in a dogfight).

Ability to continue operations in GPS/GNSS-denied or communications-denied environments also generally referred to as disrupted, disconnected, intermittent, and low-bandwidth (DDIL) environments. The ongoing war between Russia and Ukraine has brought increased attention to this challenge.

In military aviation, the costs of uncrewed Collaborative Combat Aircraft (CCA) can be significantly lower than for a crewed combat jet like the F-35 or F-22.

EASA Levels of Autonomy

Update 2.0 to the European Union Aviation Safety Agency (EASA) AI Roadmap [42] has refined the Level 3 Advanced Autonomy into two sublevels 3A and 3B to account for scenarios like operations in GPS/GNSS-denied or communications-denied environments.

Remotely Piloted vs. Fully Automated

The International Civil Aviation Organization (ICAO) Document 10019, Manual on Remotely Piloted Aircraft Systems (RPAS) [2] makes a distinction between autonomous aircraft and autonomous operation as follows:

“Autonomous aircraft: An unmanned aircraft that does not allow pilot intervention in the management of the flight.

Autonomous Operation: An operation during which a remotely piloted aircraft is operating without pilot intervention in the management of the flight.”

We believe the ICAO definition of autonomous aircraft is too restrictive because in practice, full autonomy does not preclude the capability for a remote operator to take control of the aircraft if necessary.

RPAS operations with aircraft like the MQ-9 Reaper rely on a crew of a pilot and a sensor operator working from a ground control station. Recent innovations in Satellite Launch and Recovery (SLR) and Automatic Takeoff and Landing Capability (ATLC) will provide more operational and logistical flexibility in RPAS operations [38], enabling Agile Combat Employment (ACE) in highly contested environments.

The Perspective of a Pilot Association

Let’s now consider the position of a pilot association. Also based upon the SAE J3016 levels of driving automation, the European Cockpit Association (ECA) — a “representative body” of over 40,000 pilots in the European Union (EU) — published a briefing paper titled Unmanned Aircraft Systems and the concepts of Automation and Autonomy [4] which distinguishes six levels of automation: Levels 0 to 5. The ECA Level 5 Full Automation is explicitly defined as follows:

“The sustained and unconditional (i.e., not ODD-specific) performance by an AFS [Automated Flight System] of the entire management of flight and management of flight fallback without any expectation that a mission commander will respond to a request to intervene.”

ODD is the Operational Design Domain or operational context (time, location, weather, type of mission, etc.). The ECA paper [4] specifies that no aviation knowledge or flying skills are required of the Mission Commander at Level 5 Full Automation. However, we believe that this is to emphasize that at Level 5, the AFS is expected to execute the mission in all operational conditions without human intervention, with the exception of an ON/OFF switch (more on this later). In practice, Mission Commanders will be well trained in tactics and procedures and have basic aeronautical knowledge.

AI Safety and the OFF Switch

The OFF Switch is discussed in the AI safety literature [5,6,7,8,9] and is of particular interest in the context of Reinforcement Learning (RL) which is a popular approach in autonomous control. RL is based on maximizing a reward function and thus introduces the risk of reward hacking or reward gaming by the AI system.

It is therefore imperative to specify the reward function in a way that ensures that autonomous AI systems are not able to prevent Mission Commanders from switching them off. As Hadfield-Menell et al. explained in their paper The Off-Switch Game [5], “a rational agent will maximize expected utility and cannot achieve whatever objective it has been given if it is dead.”
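To make the incentive concrete, here is a minimal sketch in the spirit of The Off-Switch Game [5]. It assumes a robot that is uncertain about the utility U of its proposed action and a rational human who lets the action proceed only when U is positive. The function name and the Gaussian belief are our own illustrative assumptions, not taken from the paper.

```python
import random

def off_switch_values(utility_samples):
    """Expected value of three options for a robot unsure about the utility U of its action.

    utility_samples: draws from the robot's belief over U (hypothetical belief model).
    """
    n = len(utility_samples)
    act = sum(utility_samples) / n                          # act now, bypassing the human: E[U]
    switch_off = 0.0                                        # switch itself off: utility 0
    defer = sum(max(u, 0.0) for u in utility_samples) / n   # let a rational human decide: E[max(U, 0)]
    return {"act": act, "switch_off": switch_off, "defer": defer}

random.seed(0)
belief = [random.gauss(0.2, 1.0) for _ in range(10_000)]    # uncertain, slightly positive belief
values = off_switch_values(belief)
print(values)
```

Under these assumptions, deferring to the human is never worse than acting or switching off, and it is strictly better whenever the robot is genuinely uncertain about the sign of U. When the robot is certain, the advantage of keeping the off switch available vanishes, which is why the way uncertainty over the objective is modeled matters.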

Several examples of specification gaming are given in [7] and potential solutions are discussed.

From Navy Top Gun Pilot to Battlespace Commander

To support uncrewed aircraft operations like the MQ-25 Stingray, the US Navy has added a third group called Aerial Vehicle Operators to the two previously existing naval aviation groups: Pilots and Naval Flight Officers (NFOs). The introduction of uncrewed aircraft brings some changes to traditional roles and has created some concerns regarding pilot identity and group cohesion [10,11].

With the transition to multi-domain operations and Collaborative Combat Aircraft (CCA), the unifying role of Battlespace Commander or Mission Commander will certainly be a more appropriate designation. From a remote control station, a Battlespace Commander can command one or multiple aircraft or swarms across domains as needed without flying them directly.

Expecting pilots of crewed aircraft to control uncrewed CCAs could significantly increase their already high workload and reduce their cognitive performance. There are also operational benefits in a distributed approach. Referring to the concept of “loyal wingman,” John Clark, the head of Lockheed Martin’s Skunk Works, remarked that uncrewed aircraft need freedom of maneuver and work best when operating detached from the crewed airplane [12,13].

The US Air Force estimates a requirement for 1,000 autonomous CCAs with a ratio of two to five CCAs per manned combat aircraft.

At the Sea-Air-Space conference in April 2023, US Navy Rear Adm. Andrew “Bucket” Loiselle, Director of the Air Warfare Division, mentioned that the Navy is building a “future force that’s going to be 60 percent unmanned” [14,15]. He added that he was close to signing an agreement with the Air Force allowing the two branches to control each other’s CCAs [15]. This collaboration fits well into the US DoD concept of Joint All-Domain Command and Control or JADC2.

The UK Royal Air Force (RAF) Autonomous Collaborative Platforms (ACP) Strategy

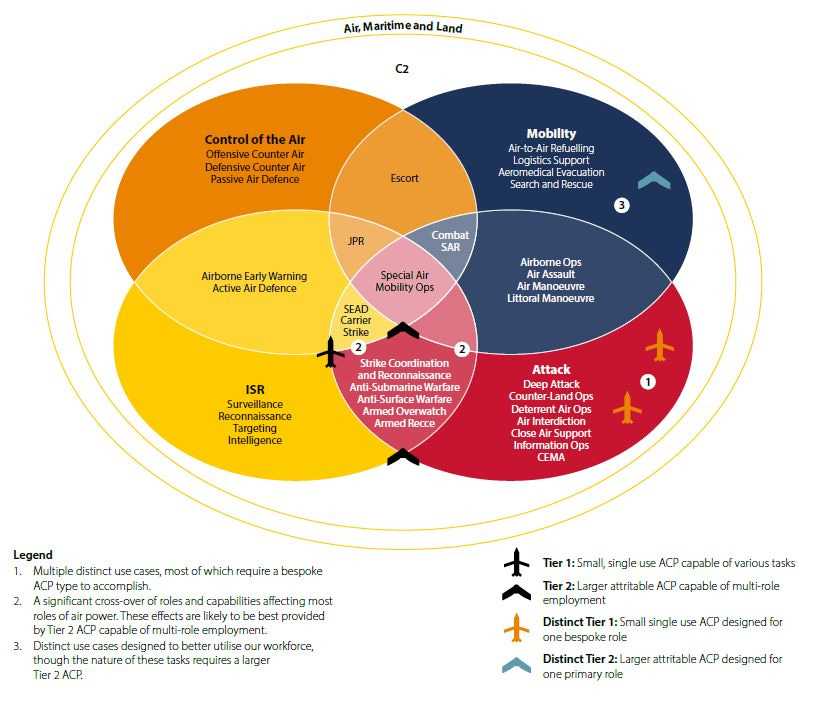

The UK MoD published a policy paper titled Royal Air Force Autonomous Collaborative Platform Strategy which states:

“By 2030 ACP are an integral part of the RAF force structure, routinely operating in partnership with crewed platform to deliver battle winning military capability across multiple domains as part of a national or coalition force” [65].

The following is a visualization of the UK Royal Air Force (RAF) Autonomous Collaborative Platforms (ACP) Strategy using the Air Power Model.

Preview of Autonomy in Action: Automated Emergency Control and Landing

The FAA has already certified Garmin’s Emergency Autoland (EA) to take control of an aircraft with the touch of a button in case of pilot incapacitation. EA is available in some aircraft like the Cirrus Vision Jet and is fully deterministic [16,17] in the sense that it does not use learning-enabled components like those built with Machine Learning and Reinforcement Learning.

Emergency control in case of pilot incapacitation will be an essential capability in Single Pilot Operations (SPOs) [18] as well. Note that the US Air Force has already been experimenting with SPOs in air refueling operations with the KC-46A Pegasus “for certain potential high-end combat scenarios” [19].

A system called the Automatic Ground Collision Avoidance System (Auto GCAS) installed on F-22, F-35, and F-16 fighter jets can autonomously take control of the aircraft in situations like G-induced loss of consciousness and spatial disorientation and execute avoidance maneuvers to prevent a controlled flight into terrain (CFIT). Auto GCAS is an implementation of the Run-Time Assurance (RTA) architecture (more on that later). According to the Air Force Research Lab (AFRL), “Fielded on F-16 Block 40/50 aircraft in 2014, Auto GCAS has since saved 10 aircraft and the lives of 11 pilots” [20].

Watch declassified footage of Auto GCAS saving an unconscious F-16 pilot:

Airbus has conducted flight tests of an AI and vision-based Autonomous Taxi, Take-Off and Landing (ATTOL) [21] system and has recently tested an automated emergency landing system called DragonFly [22] which is functionally similar to Garmin’s Emergency Autoland (EA). Like Garmin’s EA, DragonFly can take control of an aircraft in case of pilot incapacitation but is built with vision-based machine learning.

In what follows, we refer to learning-enabled systems (LES) as those built with methods like Machine Learning and Reinforcement Learning which are popular in modern AI systems for capabilities such as vision, speech, language, and autonomous vehicle control.

The big challenge currently facing the industry is how to certify LES.

Challenges to the Certification of Learning-Enabled Systems (LES)

LES using machine learning introduce new testing, verification, and certification challenges resulting from difficulties in providing formal guarantees of system behavior. Aerospace engineering practices and methods must evolve to support learning-enabled, autonomous, adaptive, high-dimensional, highly non-linear, partially observable, and non-deterministic system behavior under uncertainties.

These concerns are exacerbated by known vulnerabilities such as (this is not a complete list):

Adversarial attacks against Deep Neural Networks (DNNs) allowing an attacker to trick the model into predicting an incorrect output or an output determined by the attacker.

Model extraction and poisoning attacks.

Availability and quality of training datasets.

Out of Distribution (OOD) inputs resulting in erroneous outputs.

Lack of explainability.

Lack of causal reasoning, common-sense, and factual conceptual knowledge. Human concept learning goes beyond simple visual pattern recognition.

Tendency of Generative AI to make things up, as in ChatGPT, which is essentially a next-token predictor trained on internet-scale text corpora.

Difficulties in quantifying uncertainty and lack of model calibration.

Reward Hacking or Specification Gaming in Reinforcement Learning.

Adversarial Prompting or Prompt Injection Attacks — new attack vectors introduced by Large Language Models (LLMs).

The growing list of companies that have banned or restricted access to ChatGPT for their employees at work includes [56]:

Northrop Grumman

Apple

JPMorgan Chase

Verizon

Citigroup

Samsung

Goldman Sachs

Wells Fargo

Bank of America

Deutsche Bank

Amazon.

You may have heard about the “Turing Test” in AI. Alan Turing was a genius who made significant contributions to science, but his work should be put in the proper context. In safety critical domains like commercial aviation, the objective of testing an AI-enabled autonomous aircraft is certainly not to fool other pilots in the airspace into believing that a human pilot is in command. We believe it is time to move on altogether from the “Turing Test” in AI.

Various methods of testing, verification, and defenses for AI safety are discussed in [32, 33, 34]. In what follows, we review regulations and methods relevant to the aviation domain.

AI Safety in the Air: Early Experiences with ACAS Xu

ACAS Xu is an automated aircraft collision avoidance system built with neural networks. In ACAS Xu, the decision logic for air collision avoidance is formulated as a Markov decision process (MDP) and solved using dynamic programming, with a deep neural network serving as a function approximator.
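As a rough illustration of the dynamic programming step, the sketch below runs value iteration on a toy encounter model. The five separation bins, the two advisories, the transition probabilities, and the costs are all invented for this example and bear no relation to the actual ACAS Xu model; the neural network approximation step is omitted.

```python
GAMMA = 0.95
STATES = range(5)              # vertical separation bins; 0 means collision (terminal)
ACTIONS = ("hold", "climb")    # toy advisories, not the real ACAS Xu advisory set

def transitions(s, a):
    """Return [(probability, next_state, reward)] for a made-up encounter model."""
    if a == "hold":            # no advisory: separation tends to shrink
        outcomes, cost = [(0.7, s - 1), (0.3, s)], 0.0
    else:                      # climb advisory: separation tends to grow, small maneuver cost
        outcomes, cost = [(0.8, min(s + 1, 4)), (0.2, s)], -1.0
    return [(p, s2, cost + (-100.0 if s2 == 0 else 0.0)) for p, s2 in outcomes]

def q_value(V, s, a):
    """Expected discounted return of taking action a in state s, then following V."""
    return sum(p * (r + GAMMA * V[s2]) for p, s2, r in transitions(s, a))

def value_iteration(tol=1e-9):
    V = {s: 0.0 for s in STATES}
    while True:
        delta = 0.0
        for s in STATES:
            if s == 0:
                continue       # terminal state keeps value 0
            best = max(q_value(V, s, a) for a in ACTIONS)
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < tol:
            return V

V = value_iteration()
policy = {s: max(ACTIONS, key=lambda a: q_value(V, s, a)) for s in STATES if s != 0}
print(policy)
```

In a scheme like the one described above, the resulting value or cost table would then be approximated by a neural network, and it is that approximation which formal verification has to address.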

In Neural Network Compression of ACAS Xu Early Prototype is Unsafe: Closed-Loop Verification through Quantized State Backreachability [23], Bak and Tran show how formal methods can be used to identify unsafe counterexamples for ACAS Xu.

In Hijacking an autonomous delivery drone equipped with the ACAS-Xu system [24], Gauffriau et al. show how an aircraft equipped with ACAS Xu can be hijacked using Reinforcement Learning methods to force the landing at an alternate location determined by the attacker.

Evolving Engineering for Learning (EEL) toward Safe AI

At Atlantic AI Labs, we’ve developed a framework called Evolving Engineering for Learning (EEL) toward Safe AI to tackle these challenges. EEL is largely inspired by the aviation concept of “airworthiness” which has made air travel the safest mode of transportation by far.

Regulatory Framework for the Certification of Autonomous Driving Systems

Although the physics of autonomous flying and driving differ in many ways, the two domains will still share the same foundational technologies such as Deep Neural Networks (DNNs), Deep Reinforcement Learning (DRL), and Model Predictive Control (MPC) for functions such as perception, planning, and control. The autonomous driving industry is embracing an end-to-end approach (as opposed to the traditional modular pipelines) for perception, motion planning, and control, enabling a joint and global optimization of these functions.

On November 13, 2023, Cruise, General Motors’ self-driving unit, announced that it would pause all public road operations nationwide as a result of safety incidents and the revocation of its permit by regulators in the State of California. GM’s third-quarter earnings report indicates that the company had lost about $1.9 billion on Cruise by September of 2023, including $700 million spent during the third quarter of 2023. Cruise said it was shutting down operations to “rebuild trust,” and on November 19, 2023, the company announced the resignation of its CEO. According to its own financial statements, GM has lost $8.2 billion on Cruise since 2017.

Ford and Volkswagen shut down their joint self-driving unit Argo AI back in 2022 after investing $1 billion and $2.6 billion respectively. Before the shutdown, Argo AI reached $12.4 billion in valuation. Ford posted “a $2.7 billion non-cash, pretax impairment on its investment in Argo AI, resulting in an $827 million net loss for Q3 [of 2022].”

In March 2024, Apple shut down its autonomous vehicle project called Project Titan after spending over $10 billion.

The autonomous driving industry is further along on the path to regulation, technology maturity, and certification. Valuable lessons can already be learned from that experience. Current regulations provide guidance on simulation-based scenario testing, including the assessment of simulation credibility.

An example is the European Union (EU) Regulation 2022/1426 of 5 August 2022 which has the goal of “laying down rules for the application of Regulation (EU) 2019/2144 of the European Parliament and of the Council as regards uniform procedures and technical specifications for the type-approval of the automated driving system (ADS) of fully automated vehicles.”

Other relevant guidelines by the United Nations Economic Commission for Europe (UNECE) regarding the verification and validation of autonomous driving systems include:

ECE/TRANS/WP.29/2022/58: New Assessment/Test Method for Automated Driving (NATM) Guidelines for Validating Automated Driving System (ADS).

ECE/TRANS/WP.29-187-10/2022: Guidelines and Recommendations concerning Safety Requirements for Automated Driving Systems.

In the NATM, Validation Methods for Automated Driving (VMAD) are based on a multi-pillar approach composed of a scenarios catalogue and five validation methodologies (pillars):

Scenarios catalogue

Simulation/virtual testing

Track testing

Real world testing

Audit/assessment

In-service monitoring and reporting.

The following diagram illustrates the In-Service Monitoring and Reporting pillar (ISMR) integration within the multi-pillar framework in the NATM.

The predictive capability and credibility of simulation models for the certification of autonomous driving systems have been addressed as part of the SET level project. The following diagram shows how the Credible Simulation Process (CSP) is embedded in high-level processes.

The details of CSP are represented in the following diagram.

Regulatory Framework for the Certification of Learning-Enabled Systems in Civil Aviation

The following standards are traditionally used for certification in civil aviation:

ARP4761: Guidelines and Methods for Conducting the Safety Assessment Process on Civil Airborne Systems and Equipment.

ARP4754A: Guidelines for Development of Civil Aircraft and Systems.

DO-178C: Software Considerations in Airborne Systems and Equipment Certification.

DO-254: Design Assurance Guidance for Airborne Electronic Hardware.

A functional hazard assessment (FHA) is conducted to identify hazardous failure conditions. Acceptable failure probabilities are assigned to hardware components and design assurance levels (DAL) are assigned to software components depending on their criticality, hazard classification (catastrophic, hazardous, major, minor, no effect), and failure probability.
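The link between hazard classification and design assurance level can be sketched as a simple lookup. The mapping below follows the commonly cited DO-178C correspondence between failure condition severity and software level (A through E). The helper function is hypothetical, and a real ARP4754A assignment also accounts for system architecture and independence, which this sketch ignores.

```python
# Commonly cited correspondence between failure condition severity and software level.
DAL_BY_SEVERITY = {
    "catastrophic": "A",
    "hazardous": "B",
    "major": "C",
    "minor": "D",
    "no effect": "E",
}

def most_stringent_dal(severities):
    """Return the most stringent DAL among the failure conditions a component can contribute to.

    Hypothetical helper: "A" sorts before "E", so min() picks the most stringent level.
    """
    return min(DAL_BY_SEVERITY[s.lower()] for s in severities)

# A component that can contribute to both a minor and a hazardous failure condition.
print(most_stringent_dal(["Minor", "Hazardous"]))
```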

Also worth mentioning are the following FAA regulations:

14 CFR Part 23. (2020, September 22). Airworthiness standards: Normal category airplanes. Kansas City, MO: FAA.

14 CFR Part 25. (2021, June 10). Airworthiness standards: Transport category airplanes. FAA.

The EUROCAE WG-114/SAE G-34 joint working group is focused on the certification of AI technologies for the safe operation of aerospace systems and vehicles. Its membership includes: Boeing, Airbus, ATR, Embraer, Textron, Gulfstream, Dassault, Mitsubishi, Lockheed, Northrop Grumman, NASA, EDA, Honeywell, Collins, Thales, GE, P&W, RR, Safran, Raytheon, BAE, and others. The following are its deliverables:

AIR6988 / ER-022: Artificial Intelligence in Aeronautical Systems: Statement of Concerns (Published on 30 April 2021).

AS6983: Process Standard for Development and Certification / Approval of Aeronautical Safety-Related Products Implementing AI.

AIR6987: Artificial Intelligence in Aeronautical Systems: Taxonomy.

AIR6994: Artificial Intelligence in Aeronautical Systems: Use Cases Considerations.

The European Union Aviation Safety Agency (EASA) has published the following guidance documents related to AI:

EASA Artificial Intelligence Roadmap 2.0.

EASA Concept Paper: guidance for Level 1 & 2 machine learning applications — proposed Issue 2.

EASA Formal Methods use for Learning Assurance (ForMuLA).

New developments in DevSecOps and MLOps will certainly play a role in the learning assurance process.

At the time of writing, we are not aware of any similar guidance by the US Federal Aviation Administration (FAA). The Research, Engineering and Development Advisory Committee (REDAC) Guidance on the FY 2025 Research and Development Portfolio dated October 22, 2022 states:

“Recommendation: -Given the speed at which demands for AI/ML technologies are being developed, the REDAC Subcommittee on Aircraft Safety reiterates its previous recommendation for the FAA to expeditiously prepare and published a detailed phased roadmap for AI/ML research and development required to formulate AI/ML regulatory guidance, taking into account the FAA safety continuum and use case to accelerate deployment for lower risk aviation applications [60].”

AI introduces new challenges in terms of human factors, explainability, testing, verification, assurance, and security. We hope that these emerging standards will also address these important topics based on the latest scientific evidence.

Regulatory Framework for the Certification of Learning-Enabled Systems in Military Aviation

The following standards are traditionally used for certification in military aviation:

MIL-HDBK-516C: Department of Defense Handbook, Airworthiness Certification Criteria, 2014.

NAVAIR Instruction 13034.1F: Airworthiness and Cybersecurity Safety Policies for Air Vehicles and Aircraft Systems, 2016.

NAVAIR Airworthiness and Cybersafe Process Manual, NAVAIR Manual M-13034.1, 2016.

Air Force Instruction 62–601: USAF Airworthiness, 2010.

Air Force Policy Directive 62–6: USAF Airworthiness, 2019.

Army Regulation 70–62: Airworthiness of Aircraft Systems, 2016.

MIL-STD-882E: DoD Standard Practice: System Safety, 2012.

Joint Software Systems Safety Engineering Handbook (JSSSEH), 2010.

MIL-STD-882E and the JSSSEH assign Level of Rigor (LOR) tasks to software components based on the concepts of software criticality index (SWCI) and Software Control Category depending on hazard classification and failure probability.

The following standards are used for testing flying qualities:

MIL-STD-1797B, Flying Qualities of Piloted Aircraft, 2006.

ADS-33E-PRF. (2000). Aeronautical design standard performance specification handling qualities requirements for military rotorcraft. Redstone Arsenal, Alabama: United States Army Aviation And Missile Command Aviation.

Mitchell, D.G., Klyde, D.H., Pitoniak, S., Schulze, P.C., Manriquez, J.A., Hoffler, K.D. and Jackson, E.B., "NASA's Flying Qualities Research Contributions to MIL-STD-1797C," NASA CR-2020-5002350, 2020.

Ongoing standardization and research activities will extend these existing standards to address the challenges of certifying LES. The United States Department of Defense (DoD) formed the National Airworthiness Council Artificial Intelligence Working Group (NACAIWG) in 2021.

Assurance Methods for AI Safety

Currently, DO-178C, Software Considerations in Airborne Systems and Equipment Certification provides guidance on acceptable methods in the following supplements:

DO-331: Model-Based Development and Verification.

DO-332: Object-Oriented Technology and Related Techniques.

DO-333: Formal Methods.

Classical assurance and verification methods are not suitable for DNNs. Formal methods are particularly relevant for the verification of learning-enabled components (LEC) using techniques like Machine Learning and Reinforcement Learning.

Assurance Cases: Arguing for Certification and Safety

Traditional aviation standards like ARP4761, ARP4754A, and DO-178C are very prescriptive in nature. They have been very effective in the certification and safety of deterministic systems. LES present novel challenges that may be better handled through a safety argumentation approach. Assurance Cases are a methodology that John Rushby describes as follows [59]:

“Assurance cases are a method for providing assurance for a system by giving an argument to justify a claim about the system, based on evidence about its design, development, and tested behavior.”

Assurance Cases provide a framework for an adaptive and agile approach to safety assurance.

Formal Methods and Reachability Analysis

The goal of formal methods [43] is to provide mathematical proof that a system’s behavior satisfies a specification of its safety properties (typically expressed in Temporal Logic). Probabilistic model checking extends this approach to verify non-deterministic behavior under stochastic uncertainties.
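To give a feel for what a temporal-logic property looks like, the sketch below evaluates two common patterns over a single finite trace: an invariant ("always") and a bounded response ("whenever an alert is raised, a climb follows within k steps"). This is trace checking, not proof: a model checker would establish the property over all behaviors of a model. The trace fields and thresholds are invented for illustration.

```python
def always(trace, pred):
    """G pred: the predicate holds at every step of the finite trace."""
    return all(pred(step) for step in trace)

def bounded_response(trace, trigger, response, k):
    """G (trigger -> F<=k response): every trigger is followed by a response within k steps."""
    return all(
        any(response(later) for later in trace[i:i + k + 1])
        for i, step in enumerate(trace)
        if trigger(step)
    )

# Hypothetical recorded trace of an encounter.
trace = [
    {"sep_ft": 900, "alert": False, "climb": False},
    {"sep_ft": 650, "alert": True,  "climb": False},
    {"sep_ft": 560, "alert": True,  "climb": True},
    {"sep_ft": 700, "alert": False, "climb": True},
]

safe = always(trace, lambda s: s["sep_ft"] >= 500)
responsive = bounded_response(trace, lambda s: s["alert"], lambda s: s["climb"], k=1)
print(safe, responsive)
```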

Reachability analysis consists of computing the set of states the system can reach over a finite time horizon, starting from a set of initial states and a model of the system. As mentioned previously, reachability analysis has been used to identify unsafe counterexamples for ACAS Xu, an automated aircraft collision avoidance system built with neural networks [23].
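A minimal sketch of the idea, assuming a simple discrete-time vertical motion model (altitude h, vertical speed v, bounded acceleration command u) and interval boxes as the set representation. Both the model and the numbers are illustrative assumptions; tools used on systems like ACAS Xu rely on far richer set representations and on the closed-loop neural network controller.

```python
def reach_boxes(h, v, u, dt, steps):
    """Over-approximate reachable (altitude, vertical speed) boxes over a finite horizon.

    h, v, u are (low, high) intervals; assumed dynamics: h' = h + dt*v, v' = v + dt*u, dt > 0.
    """
    boxes = [(h, v)]
    for _ in range(steps):
        h = (h[0] + dt * v[0], h[1] + dt * v[1])   # uses the vertical speed bounds before the update
        v = (v[0] + dt * u[0], v[1] + dt * u[1])
        boxes.append((h, v))
    return boxes

boxes = reach_boxes(h=(1000.0, 1050.0), v=(-10.0, -5.0), u=(-1.0, 1.0), dt=1.0, steps=10)
floor_ft = 800.0
is_safe = all(h_box[0] > floor_ft for h_box, _ in boxes)   # lowest reachable altitude stays above the floor
print(boxes[-1], is_safe)
```

If the lower altitude bound of any box dropped below the floor, the analysis would be inconclusive rather than a proof of unsafety, since boxes over-approximate the true reachable set.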

Safety Filtering and Runtime Assurance (RTA)

In an RTA architecture, when a runtime Safety Monitor detects a fault in the Primary Controller, control is switched to a Backup Controller. For example, the Primary Controller can be a human pilot who is incapacitated as a result of G-induced loss of consciousness (this is the case with Auto-GCAS) or a DNN-based controller for which it is difficult to provide formal safety guarantees through verification.

ASTM’s F3269-17 Standard Practice For Methods To Safely Bound Flight Behavior Of Unmanned Aircraft Systems Containing Complex Functions defines an RTA architecture known as the Simplex Architecture in which the Backup Controller is activated through a switch based on a built-in Decision Logic unit.
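The switching logic of such an architecture can be sketched in a few lines. The monitor below uses a crude altitude prediction as its decision logic, and the controllers, state fields, and thresholds are hypothetical placeholders rather than anything taken from F3269-17 or Auto GCAS.

```python
def safety_monitor(state, floor_ft=500.0, lookahead_s=5.0):
    """Return True if the predicted altitude stays above the floor (toy decision logic)."""
    predicted_alt_ft = state["alt_ft"] + lookahead_s * state["vs_fps"]
    return predicted_alt_ft >= floor_ft

def backup_controller(state):
    """Simple, verifiable recovery behavior: pull up at full throttle."""
    return {"pitch_cmd_deg": 15.0, "throttle": 1.0}

def rta_step(state, primary_controller):
    """Pass through the primary command unless the monitor predicts a violation."""
    if safety_monitor(state):
        return "primary", primary_controller(state)
    return "backup", backup_controller(state)

def learned_controller(state):
    """Stand-in for a learning-enabled primary controller."""
    return {"pitch_cmd_deg": -5.0, "throttle": 0.6}

high = rta_step({"alt_ft": 3000.0, "vs_fps": -50.0}, learned_controller)   # predicted 2750 ft
low = rta_step({"alt_ft": 700.0, "vs_fps": -60.0}, learned_controller)     # predicted 400 ft
print(high[0], low[0])
```

The appeal of the pattern is that only the monitor and the backup controller need to be simple enough to verify with classical methods, while the primary controller can remain complex.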

Advanced RTA methods include Safety Filtering based on the concept of set invariance. Safety Filtering includes techniques such as Hamilton-Jacobi reachability, control barrier functions (CBF), and data-driven predictive control methods to smoothly ensure that the system always operates within a safe invariant subset of the system state space [40].
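As a minimal worked example of a control barrier function, assume a one-dimensional single-integrator model x' = u, where x is the height above a floor x_min. With barrier h(x) = x - x_min, the CBF condition h' + alpha*h >= 0 reduces to u >= -alpha*(x - x_min), so the filter that stays closest to the nominal command has a closed form. The model, gains, and function names are our own assumptions for illustration.

```python
def cbf_filter(x, u_nom, x_min=0.0, alpha=1.0):
    """Minimally modify u_nom so that u >= -alpha * (x - x_min) holds."""
    return max(u_nom, -alpha * (x - x_min))

def simulate(x0, u_nom, dt=0.1, steps=100, x_min=0.0, alpha=1.0):
    """Euler simulation of x' = u with the filter in the loop (requires alpha * dt <= 1)."""
    xs = [x0]
    for _ in range(steps):
        u = cbf_filter(xs[-1], u_nom, x_min, alpha)
        xs.append(xs[-1] + dt * u)
    return xs

xs = simulate(x0=10.0, u_nom=-5.0)   # the nominal controller keeps commanding a descent
print(min(xs))
```

Far from the floor the nominal command passes through unchanged. Near the floor the filter gradually reduces the descent rate, which is the smooth behavior that distinguishes safety filtering from a hard switch to a backup controller.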

Fault Detection, Isolation, and Recovery (FDIR)

Modern aircraft rely on various data-driven mechanisms for fault detection and recovery based on system redundancy. These mechanisms include checking that sensor measurement values remain within certain lower and upper bounds of the flight envelope; cross-checks and consistency checks; voting schemes; and various other built-in tests [39].
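The bound checks and voting schemes mentioned above can be sketched for a set of redundant sensors as follows. The sensor names, limits, and deviation threshold are hypothetical, and real systems add persistence counters, latching, and reconfiguration logic that are left out here.

```python
from statistics import median

def fdir_vote(readings, lo, hi, max_dev):
    """Range-check redundant sensors, cross-check them against the median, and vote.

    Returns (voted_value, isolated_sensor_names).
    """
    in_range = {name: v for name, v in readings.items() if lo <= v <= hi}
    if not in_range:
        raise ValueError("no sensor passed the range check")
    mid = median(in_range.values())
    healthy = {name: v for name, v in in_range.items() if abs(v - mid) <= max_dev}
    if not healthy:
        raise ValueError("no consistent sensors left to vote")
    isolated = sorted(set(readings) - set(healthy))
    return median(healthy.values()), isolated

# Triplex angle-of-attack sensors (degrees); aoa_3 reports an out-of-range value.
voted, isolated = fdir_vote({"aoa_1": 4.9, "aoa_2": 5.1, "aoa_3": 74.5},
                            lo=-20.0, hi=40.0, max_dev=2.0)
print(voted, isolated)
```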

In general, FDIR can be classified as data-driven, model-based, or a mix of the two. Advanced model-based approaches include nonlinear Kalman filtering, set membership, observer, LPV, and sliding mode designs [46].

Certification by Analysis (CbA) aka Certification by Simulation

CbA is currently being explored for both fixed-wing [48] and rotorcraft [49] certification. In our view, the purpose of CbA is to make physical flight testing safer, more effective, and more comprehensive, particularly in the border regions of the flight envelope. As Robert Gregg, Chief Aerodynamicist at the Boeing Company, nicely put it at the ICAS 2022 conference, the goal of CbA is:

“To accurately predict the flight characteristics and validate these characteristics in flight – ‘right at the first flight’ and ‘right for the right reason’” [55].

The following diagram depicts the overall structure of the Certification by Simulation Process in the Preliminary Guidelines for the Rotorcraft Certification by Simulation Process Update No 1, March 2023 [49].

Digital Twins enabled by Modeling and Simulation (M&S) will play a key role throughout the product life cycle: design, manufacturing, testing, certification, training, maintenance, and operations. Reduced Order Modeling (ROM) using machine learning is improving the fidelity of modeling and simulation (M&S).

However, Digital Twins are not a substitute for operational testing and evaluation (OT&E) in the real-world physical environment. In the aviation domain, physical flight testing is necessary for regulatory certification to account for the inherent limitations of M&S such as coupled physics (e.g., highly non-linear dynamics during stall at high angle of attack), approximations and errors in numerical computations, unpredictable operational scenarios, and various other uncertainties.

Scenario-Based Testing

Scenario-Based Testing (SBT) has been explored in the context of autonomous driving and is now mandated by the new EU regulation 2022/1426 for type approvals of motor vehicles with a fully automated driving function.

A survey of scenario generation methods for testing self-driving cars is provided by [47]. Similar techniques can be adapted to the aviation domain while leveraging existing databases of previous aviation accident investigation reports to generate realistic scenarios.
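One of the simplest scenario generation strategies is combinatorial enumeration of a parameter space followed by filtering. The sketch below shows that idea only; the parameter names and values are hypothetical and are not drawn from [47] or from any accident database.

```python
from itertools import product

# Hypothetical scenario parameters for an approach-and-landing test campaign.
PARAMETERS = {
    "crosswind_kt": [0, 15, 30],
    "visibility_km": [0.5, 5.0, 10.0],
    "injected_event": ["none", "bird_strike", "gps_loss"],
}


def generate_scenarios(parameters, keep=lambda scenario: True):
    """Yield every combination of parameter values that passes `keep`."""
    names = list(parameters)
    for values in product(*(parameters[name] for name in names)):
        scenario = dict(zip(names, values))
        if keep(scenario):
            yield scenario


all_scenarios = list(generate_scenarios(PARAMETERS))
challenging = list(
    generate_scenarios(
        PARAMETERS,
        keep=lambda s: s["crosswind_kt"] >= 15 and s["visibility_km"] <= 5.0,
    )
)
print(len(all_scenarios), len(challenging))
```

Exhaustive enumeration grows exponentially with the number of parameters, which is one reason sampling-based and search-based generation methods are of interest.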

Dynamic Fault and Attack Injection

Injection-based methods test system behavior for safety by deliberately introducing input parameters and operational events that correspond to potential adversarial attacks (including cybersecurity and privacy attacks) or to software and hardware faults or errors [41].

Fault and attack injections can be model-based, simulation-based, or directly applied to the actual cyber-physical AI system in operational testing. Fault injections are designed to test system behavior under artificially generated faults including sensor, software, interface, and hardware (e.g., actuator) faults. Examples of flight control systems faults include: angle of attack (AOA) sensor fault, oscillatory failure case (OFC), runaway, and jamming.

Dynamic Fault and Attack injection methods can rely on simulation using a digital twin of the system.
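A simulation-based fault injection can be as simple as wrapping a nominal signal with a fault model before it reaches the system under test. The sketch below is a simplified illustration: the fault models are additive or freeze approximations of the fault types named above, and the function name, parameters, and default values are assumptions.

```python
import math


def inject_fault(signal, kind, start, dt=0.01, magnitude=1.0, freq_hz=2.0):
    """Return a copy of `signal` with a fault of type `kind` from index `start`.

    Simplified, assumed fault models:
      bias        - constant offset added to the signal
      jamming     - output frozen at its value when the fault begins
      runaway     - offset growing linearly at `magnitude` units per second
      oscillatory - sinusoidal offset of amplitude `magnitude` at `freq_hz`
    """
    out = list(signal)
    for k in range(start, len(out)):
        t = (k - start) * dt           # time elapsed since fault onset
        if kind == "bias":
            out[k] = signal[k] + magnitude
        elif kind == "jamming":
            out[k] = signal[start]
        elif kind == "runaway":
            out[k] = signal[k] + magnitude * t
        elif kind == "oscillatory":
            out[k] = signal[k] + magnitude * math.sin(2 * math.pi * freq_hz * t)
        else:
            raise ValueError(f"unknown fault kind: {kind}")
    return out


nominal = [0, 1, 2, 3, 4]
print(inject_fault(nominal, "jamming", start=2))
```

The faulty signal can then be fed to a detector or controller in place of the nominal one to check that the fault is detected and handled within the required time.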

Uncertainty Quantification

Operational Testing and Evaluation (OT&E) and Training, Tactics, Techniques, and Procedures (TTPs) will need to evolve as well to support AI Safety. For example, the outputs of AI algorithms are probabilistic and non-deterministic in nature and the ability to quantify and understand uncertainties will be essential for safety-critical operations, particularly when human life is at stake. Out-of-distribution (OOD) inputs are a particular concern and require proper handling during operations.

Conformal Prediction and Conformal Risk Control [35] are methods that can produce uncertainty sets or intervals of the model’s predictions with guarantees based on a probability that can be specified by the user.
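The sketch below illustrates standard split conformal prediction for regression, which is the simpler relative of the conformal risk control method in [35] rather than that method itself. Given a held-out calibration set, it returns an interval that contains the true value with probability at least 1 - alpha, provided that the calibration and test points are exchangeable. The function names and the toy data are assumptions made for illustration.

```python
import math


def conformal_quantile(scores, alpha):
    """Finite-sample-corrected (1 - alpha) quantile of calibration scores."""
    n = len(scores)
    rank = math.ceil((n + 1) * (1 - alpha))    # 1-based rank
    if rank > n:
        return math.inf    # too few calibration points for this alpha
    return sorted(scores)[rank - 1]


def conformal_interval(predict, calib_x, calib_y, x_new, alpha=0.1):
    """Prediction interval around predict(x_new) using absolute residuals."""
    scores = [abs(y - predict(x)) for x, y in zip(calib_x, calib_y)]
    q = conformal_quantile(scores, alpha)
    center = predict(x_new)
    return center - q, center + q


# Toy model and calibration data with residuals of magnitude 1..10.
predict = lambda x: 2 * x
calib_x = list(range(10))
residuals = [1, -2, 3, -4, 5, -6, 7, -8, 9, -10]
calib_y = [predict(x) + e for x, e in zip(calib_x, residuals)]

low, high = conformal_interval(predict, calib_x, calib_y, x_new=5, alpha=0.2)
print(low, high)
```

The guarantee is marginal (averaged over calibration and test draws) and says nothing about out-of-distribution inputs, for which the exchangeability assumption fails.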

As mentioned previously, metacognition allows humans to monitor the accuracy and uncertainty of their perception of the environment. A similar mechanism will be needed in autonomous AI systems.

System operators should be trained in understanding and evaluating AI-related uncertainties and proper operating procedures when interacting with AI systems.

The increasing use of modeling and simulation (M&S) and digital twins for scenario-based testing of autonomous systems will also raise the issue of simulation predictive capability and credibility assessment that will necessitate uncertainty quantification [50].

Explainability

When an accident occurs, the public wants to know what happened and why. Detailed accident investigation reports and recommendations are disseminated, reviewed, and implemented by aviation professionals. This has contributed to significant improvements in aviation safety.

Airplanes are required by regulation to have a flight data recorder and cockpit voice recorder that should be made available to regulators in the event of an accident or occurrence requiring notification. Data retrieved from the recorders are used to help determine the cause of accidents. This can also enable the complete simulated reconstruction of an accident, as illustrated in the following USAF accident investigation report of a mishap involving an F-35 at Hill Air Force Base on October 19, 2022.

The explainability of AI systems behavior is therefore a sine qua non in flight operations. Popular ML explainability methods include LIME, SHAP, and Meaningful Perturbations. Covert et al. [31] proposed a unified framework for model explanation called removal-based explanations, which consists of simulating the removal of features and analyzing their influence on the model’s behavior.
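The simplest member of the removal-based family is a leave-one-feature-out (occlusion) attribution: each feature is "removed" by replacing it with a baseline value, and the resulting change in the model output is taken as its score. The sketch below illustrates only that basic idea; it is not the implementation from [31], and the toy model, baseline choice, and function name are assumptions.

```python
def removal_importance(model, x, baseline):
    """Score each feature by the output change when it is set to its baseline."""
    full_output = model(x)
    scores = []
    for i in range(len(x)):
        x_removed = list(x)
        x_removed[i] = baseline[i]     # simulate removal of feature i
        scores.append(full_output - model(x_removed))
    return scores


def toy_model(x):
    """Hypothetical linear model in which the third feature is irrelevant."""
    return 3.0 * x[0] - 2.0 * x[1] + 0.0 * x[2]


scores = removal_importance(toy_model, x=[1.0, 2.0, 5.0], baseline=[0.0, 0.0, 0.0])
print(scores)
```

The choice of baseline (zeros, feature means, or samples from a background dataset) changes the attribution, which is one of the design dimensions that differ across removal-based methods.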

In [64], Amoukou describes the limitations of LIME and SHAP and proposes an alternative approach called Regional Explanations.

Cybersecurity and Privacy

LES in autonomous air vehicles are vulnerable to cyber attacks like any other node in a networked environment [51]. The DARPA HACMS program (High Assurance Cyber Military Systems) was initiated to develop cybersecurity solutions for network-enabled embedded systems.

The Secure Mathematically-Assured Composition of Control Models (SMACCM) [52] project in the DARPA HACMS program used formal methods to develop provably secure defenses against different types of cyber attacks. These defenses have been deployed on a SMACCMcopter research vehicle and the Boeing Unmanned Little Bird helicopter. Security assessment and penetration testing by a Red Team were performed as part of the project.

Communicating with automatic speech recognition (ASR)

The quality of ASR has improved significantly in recent years with the use of Deep Neural Networks. ASR has the potential to play a role in the control of UAVs.

A safety assessment has been conducted as part of the EU SESAR 2020 project PJ.16-04 “CWP HMI” (Controller Working Position Human Machine Interface) on the use of ASR for the automatic recognition of aircraft callsigns, ATC commands, and other voice communications between pilots and controllers [61].

Human Factors: The Neuroergonomics of AI-Human Teaming

The neuroergonomics of AI-human interaction is currently understudied. There are some reports in the press of people anthropomorphizing AI systems or believing in the sentience of AI chatbots after interacting with them, sometimes with dramatic consequences. There were several aviation accidents related to human factors during the introduction of the glass cockpit in the 1980s and 1990s.

Neuroergonomics can provide a better understanding of the neural and physiological correlates of effective human-to-AI vs. human-to-human teaming. As Paul Eden correctly points out in an article on the Royal Aeronautical Society website [45] regarding autonomous collaborative combat aircraft (CCAs):

“Pilots simply won’t have the capacity to be involved in the detailed operation of those assets, so they’ll need to interact in a more goal orientated manner, supplying tasks and providing oversight as a flight lead might.”

In a hyperscanning experiment, Dehais et al. [25] made neurophysiologic measurements using ECG and EEG while human pilots and ground controllers were interacting with each other and with AI systems performing the same roles in a close air support scenario. The authors noted that humans can cooperate effectively with AI systems whether or not the humans were aware that they were sometimes interacting with AI systems.

The experiment revealed that human pilots and ground controllers can be fooled by AI systems. However, as mentioned previously, we believe that the Turing Test (originally called the Imitation Game by Turing) has no role in aviation because it says nothing about the safety and reliability of AI systems.

The EEG analysis in [25] revealed a higher level of neurophysiologic synchrony during human-to-human interactions. Previous studies have revealed a high level of neural synchrony between human operators collaborating during periods of high cognitive workload [26, 27, 28, 29].

Testing the Flying Qualities and Handling Qualities of Autonomous Aircraft

MIL-STD-1797B and ADS-33E-PRF are used for testing the flying qualities of piloted fixed-wing aircraft and rotorcraft, respectively. It was recognized early on that these standards must evolve [62] to support the unique requirements of autonomous aircraft.

NASA released a report titled Defining handling qualities of unmanned aerial systems: Phase II final report [63]. The following diagram from the report depicts the proposed UAS Handling Qualities Assessment Process.

Learning from Captain “Sully”: Toward Cognitive Control

Can autonomous technologies ever provide the level of safety currently made possible by the presence of a competent human pilot in the cockpit?

In a previous post titled Introducing Evolving Engineering for Learning (EEL) toward Safe AI, I discuss why pure control approaches to autonomy that rely on techniques like Deep Reinforcement Learning (DRL) and Model Predictive Control (MPC) are insufficient for achieving Level 5 autonomy and must be augmented and integrated within a Cognitive Architecture — an approach that we call Cognitive Control, a concept borrowed from neuroscience and the cognitive sciences.

References

[1] SAE Levels of Driving Automation™ Refined for Clarity and International Audience https://www.sae.org/blog/sae-j3016-update

[2] ICAO Document 10019, Manual on Remotely Piloted Aircraft Systems (RPAS) https://store.icao.int/en/manual-on-remotely-piloted-aircraft-systems-rpas-doc-10019

[3] ASTM TR1-EB Autonomy Design and Operations in Aviation: Terminology and Requirements Framework

[4] European Cockpit Association (ECA), Unmanned Aircraft Systems and the concepts of Automation and Autonomy, https://www.eurocockpit.be/positions-publications/unmanned-aircraft-systems-and-concepts-automation-and-autonomy

[5] Hadfield-Menell, Dylan, Anca Dragan, Pieter Abbeel, and Stuart Russell. "The off-switch game." arXiv preprint arXiv:1611.08219 (2016). https://arxiv.org/abs/1611.08219

[6] Wängberg, Tobias, Mikael Böörs, Elliot Catt, Tom Everitt, and Marcus Hutter. "A game-theoretic analysis of the off-switch game." In Artificial General Intelligence: 10th International Conference, AGI 2017, Melbourne, VIC, Australia, August 15-18, 2017, Proceedings 10, pp. 167-177. Springer International Publishing, 2017. https://link.springer.com/chapter/10.1007/978-3-319-63703-7_16

[7] Specification gaming: the flip side of AI ingenuity https://www.deepmind.com/blog/specification-gaming-the-flip-side-of-ai-ingenuity

[8] Everitt, Tom, Gary Lea, and Marcus Hutter. "AGI safety literature review." arXiv preprint arXiv:1805.01109 (2018). https://arxiv.org/abs/1805.01109

[9] Krakovna, Victoria, and Janos Kramar. "Power-seeking can be probable and predictive for trained agents." arXiv preprint arXiv:2304.06528 (2023). https://arxiv.org/abs/2304.06528

[10] Naval Flight Officers’ Unmanned Future https://www.usni.org/magazines/proceedings/2021/september/naval-flight-officers-unmanned-future

[11] Unmanned Future Threatens Pilot Identity https://www.usni.org/magazines/proceedings/2022/september/unmanned-future-threatens-pilot-identity-0

[12] Lockheed Martin’s Skunk Works Sees Value in MUM-T, Autonomous Aircraft https://www.airandspaceforces.com/lockheed-martins-skunk-works-sees-value-in-mum-t-autonomous-aircraft/

[13] Vision For Future Manned-Unmanned Air Combat Laid Out By Skunk Works https://www.thedrive.com/the-war-zone/vision-for-future-manned-unmanned-air-combat-laid-out-by-skunk-works

[14] Navy Carrier-Based Drones Will Be Able To Be Controlled By The Air Force https://www.thedrive.com/the-war-zone/navy-carrier-based-drones-will-be-able-to-be-controlled-by-the-air-force

[15] Navy seeking ability and permission to take control of Air Force’s CCA drones — and reciprocate https://defensescoop.com/2023/04/07/navy-seeking-ability-and-permission-to-take-control-of-air-forces-cca-drones-and-reciprocate/

[16] "Emergency Autoland puts Garmin on the bleeding edge of autonomous flying" at the Air Current https://theaircurrent.com/technology/emergency-autoland-puts-garmin-on-the-bleeding-edge-of-autonomous-flying/

[17] FAA Certifies Cirrus Vision Jet’s Safe Return Becoming the First Jet Aircraft to be Certified with Garmin Autoland https://cirrusaircraft.com/story/faa-certifies-cirrus-vision-jets-safe-return-becoming-the-first-jet-aircraft-to-be-certified-with-garmin-autoland/

[18] Flying solo: Technology takes aim at co-pilots https://www.politico.eu/article/flying-solo-technology-takes-aim-at-co-pilots/

[19] McConnell completes KC-46 flight with limited crew https://www.amc.af.mil/News/Article-Display/Article/3203884/mcconnell-completes-kc-46-flight-with-limited-crew/

[20] Congressional report commends AFRL for life-saving collision avoidance technology; integrated air and ground system remains near transition https://www.afrl.af.mil/News/Article/2678754/congressional-report-commends-afrl-for-life-saving-collision-avoidance-technolo/

[21] Airbus concludes ATTOL with fully autonomous flight tests https://www.airbus.com/en/newsroom/press-releases/2020-06-airbus-concludes-attol-with-fully-autonomous-flight-tests

[22] Could the humble dragonfly help pilots during flight? https://www.airbus.com/en/newsroom/stories/2023-01-could-the-humble-dragonfly-help-pilots-during-flight

[23] S. Bak and H.-D. Tran, "Neural network compression of ACAS Xu early prototype is unsafe: Closed-loop verification through quantized state backreachability," in NASA Formal Methods, 2022, pp. 280–298. https://arxiv.org/abs/2201.06626

[24] Gauffriau, Adrien, David Bertoin, and Jayant Sen Gupta. "Hijacking an autonomous delivery drone equipped with the ACAS-Xu system." In ERTS2022. 2022. https://hal.science/hal-03693913/

[25] Dehais, Frédéric and Vergotte, Grégoire and Drougard, Nicolas and Ferraro, Giuseppe and Somon, Bertille and Ponzoni Carvalho Chanel, Caroline and Roy, Raphaëlle N. AI can fool us humans, but not at the psycho-physiological level: a hyperscanning and physiological synchrony study. (2021) In: IEEE International Conference on Systems, Man, and Cybernetics (IEEE SMC 2021), 17 October 2021 - 20 October 2021 (Virtual event, Australia). https://hal.science/hal-03384963/document

[26] N. Sciaraffa, G. Borghini, P. Arico, G. Di Flumeri, A. Colosimo, A. Bezerianos, N. V. Thakor, and F. Babiloni, “Brain interaction during cooperation: Evaluating local properties of multiple-brain network,” Brain sciences, vol. 7, no. 7, p. 90, 2017.

[27] K. J. Verdiere, F. Dehais, and R. N. Roy, “Spectral EEG-based classification for operator dyads’ workload and cooperation level estimation,” in IEEE Int Conf Syst Man Cybern, 2019, pp. 3919–3924.

[28] R. N. Roy, K. J. Verdiere, and F. Dehais, “EEG covariance-based estimation of cooperative states in teammates,” in Int Conf Human-Computer Interaction. Springer, 2020, pp. 383–393.

[29] J. Toppi, G. Borghini, M. Petti, E. J. He, V. De Giusti, B. He, L. Astolfi, and F. Babiloni, “Investigating cooperative behavior in ecological settings: an eeg hyperscanning study,” PloS one, vol. 11, no. 4, p. e0154236, 2016.

[30] Lundberg, S. M., and Lee, S.-I., “A Unified Approach to Interpreting Model Predictions,” Proceedings of the 31st International Conference on Neural Information Processing Systems, Curran Associates Inc., Red Hook, NY, USA, 2017, p. 4768–4777. https://arxiv.org/abs/1705.07874

[31] Covert, Ian C., Scott Lundberg, and Su-In Lee. "Explaining by removing: A unified framework for model explanation." The Journal of Machine Learning Research 22, no. 1 (2021): 9477-9566. https://arxiv.org/abs/2011.14878

[32] Xiang, Chong, Saeed Mahloujifar, and Prateek Mittal. "{PatchCleanser}: Certifiably Robust Defense against Adversarial Patches for Any Image Classifier." In 31st USENIX Security Symposium (USENIX Security 22), pp. 2065-2082. 2022. https://www.usenix.org/conference/usenixsecurity22/presentation/xiang

[33] Dai, Sihui, Saeed Mahloujifar, Chong Xiang, Vikash Sehwag, Pin-Yu Chen, and Prateek Mittal. "MultiRobustBench: Benchmarking Robustness Against Multiple Attacks." arXiv preprint arXiv:2302.10980 (2023). https://arxiv.org/abs/2302.10980

[34] Huang, Xiaowei, Daniel Kroening, Wenjie Ruan, James Sharp, Youcheng Sun, Emese Thamo, Min Wu, and Xinping Yi. "A survey of safety and trustworthiness of deep neural networks." arXiv preprint arXiv:1812.08342 (2018). https://arxiv.org/pdf/1812.08342.pdf

[35] Angelopoulos, Anastasios N., Stephen Bates, Adam Fisch, Lihua Lei, and Tal Schuster. "Conformal risk control." arXiv preprint arXiv:2208.02814 (2022). https://arxiv.org/abs/2208.02814

[36] These Mighty Shorebirds Keep Breaking Flight Records—And You Can Follow Along https://www.audubon.org/news/these-mighty-shorebirds-keep-breaking-flight-records-and-you-can-follow-along

[37] Godwit (birds) migration tracked (Global) - BBC News - 14th November 2021

[38] SLR: creating new legacy https://www.creech.af.mil/News/Article-Display/Article/3375370/slr-creating-new-legacy/

[39] Goupil, Philippe, and Ali Zolghadri. "The challenge of advanced FDI algorithms for aircraft systems." IFAC-PapersOnLine 55, no. 6 (2022): 591-596.

[40] K. L. Hobbs, M. L. Mote, M. C. L. Abate, S. D. Coogan and E. M. Feron, "Runtime Assurance for Safety-Critical Systems: An Introduction to Safety Filtering Approaches for Complex Control Systems," in IEEE Control Systems Magazine, vol. 43, no. 2, pp. 28-65, April 2023, doi: 10.1109/MCS.2023.3234380.

[41] Agarwal, Udit Kumar, Abraham Chan, and Karthik Pattabiraman. "LLTFI: Framework Agnostic Fault Injection for Machine Learning Applications (Tools and Artifact Track)." In 2022 IEEE 33rd International Symposium on Software Reliability Engineering (ISSRE), pp. 286-296. IEEE, 2022.

[42] Joint Authorities for Rulemaking of Unmanned Systems (JARUS) Methodology for Evaluation of Automation for UAS Operations http://jarus-rpas.org/content/new-publication-jarus-methodology

[43] Formal Methods use for Learning Assurance (ForMuLA) https://www.easa.europa.eu/en/document-library/general-publications/easa-collins-aerospace-ipc-project-formula-formal-methods-use

[44] EASA Artificial Intelligence Roadmap 2.0 https://www.easa.europa.eu/en/document-library/general-publications/easa-artificial-intelligence-roadmap-20

[45] Paul E. Eden, "Future cockpits - flying with the blink of an eye" https://www.aerosociety.com/news/future-cockpits-flying-with-the-blink-of-an-eye/

[46] Zolghadri, Ali. "The challenge of advanced model-based FDIR for real-world flight-critical applications." Engineering Applications of Artificial Intelligence 68 (2018): 249-259.

[47] Schütt, Barbara, Joshua Ransiek, Thilo Braun, and Eric Sax. "1001 Ways of Scenario Generation for Testing of Self-driving Cars: A Survey." arXiv preprint arXiv:2304.10850 (2023).

[48] Mauery, Timothy, Juan Alonso, Andrew Cary, Vincent Lee, Robert Malecki, Dimitri Mavriplis, Gorazd Medic, John Schaefer, and Jeffrey Slotnick. A guide for aircraft certification by analysis. No. NASA/CR-20210015404. 2021.

[49] Padfield, Gareth D., Linghai Lu, Philip Podzus, Mark White, and Giuseppe Quaranta. "Preliminary guidelines for the rotorcraft certification by simulation process: update no. 1, March 2023."

[50] Thelen, Adam, Xiaoge Zhang, Olga Fink, Yan Lu, Sayan Ghosh, Byeng D. Youn, Michael D. Todd, Sankaran Mahadevan, Chao Hu, and Zhen Hu. "A comprehensive review of digital twin—part 2: roles of uncertainty quantification and optimization, a battery digital twin, and perspectives." Structural and multidisciplinary optimization 66, no. 1 (2023): 1.

[51] Wang, Xianghua, Ali Zolghadri, and Changqing Wang. "Covert Attack Detection and Secure Control for Cyber Physical Systems." (2023).

[52] Cofer, Darren, Andrew Gacek, John Backes, Michael W. Whalen, Lee Pike, Adam Foltzer, Michal Podhradsky et al. "A formal approach to constructing secure air vehicle software." Computer 51, no. 11 (2018): 14-23.

[53] Niven, Jeremy E., and Simon B. Laughlin. "Energy limitation as a selective pressure on the evolution of sensory systems." Journal of Experimental Biology 211, no. 11 (2008): 1792-1804.

[54] Levenson, Richard M., Elizabeth A. Krupinski, Victor M. Navarro, and Edward A. Wasserman. "Pigeons (Columba livia) as trainable observers of pathology and radiology breast cancer images." PloS one 10, no. 11 (2015): e0141357.

[55] Towards certification by analysis for flight characteristics general lecture - YouTube

[56] Aaron Mok, "Amazon, Apple, and 12 other major companies that have restricted employees from using ChatGPT." Business Insider, Jul 11, 2023. https://www.businessinsider.com/chatgpt-companies-issued-bans-restrictions-openai-ai-amazon-apple-2023-7

[57] Harvey, Christina, Guido de Croon, Graham K. Taylor, and Richard J. Bomphrey. "Lessons from natural flight for aviation: then, now and tomorrow." Journal of experimental biology 226, no. Suppl_1 (2023): jeb245409.

[58] Paredes-Vallés, Federico, Jesse Hagenaars, Julien Dupeyroux, Stein Stroobants, Yingfu Xu, and Guido de Croon. "Fully neuromorphic vision and control for autonomous drone flight." arXiv preprint arXiv:2303.08778 (2023).

[59] Rushby, John. "The interpretation and evaluation of assurance cases." Comp. Science Laboratory, SRI International, Tech. Rep. SRI-CSL-15-01 (2015).

[60] Research, Engineering and Development Advisory Committee (REDAC) Guidance on the FY 2025 Research and Development Portfolio. October 22, 2022. https://www.faa.gov/redac/REDAC-Guidance-for-FY2025-October212022

[61] Pinska-Chauvin, Ella, Hartmut Helmke, Jelena Dokic, Petri Hartikainen, Oliver Ohneiser, and Raquel García Lasheras. "Ensuring Safety for Artificial-Intelligence-Based Automatic Speech Recognition in Air Traffic Control Environment." Aerospace 10, no. 11 (2023): 941.

[62] Bingaman, J. "Unmanned aircraft and the applicability of military flying qualities standards." In AIAA Atmospheric Flight Mechanics Conference, p. 4415. 2012.

[63] Klyde, David H., Chase P. Schulze, Justin P. Miller, Jose A. Manriquez, Aditya Kotikalpudi, David G. Mitchell, Peter J. Seiler et al. Defining handling qualities of unmanned aerial systems: Phase II final report. No. NASA/CR-2020-220564. 2020.

[64] Ibrahim Amoukou, Salim. "Trustworthy machine learning: explainability and distribution-free uncertainty quantification." PhD diss., université Paris-Saclay, 2023.

[65] Royal Air Force Autonomous Collaborative Platform Strategy. Ministry of Defence. Published 27 March 2024. https://www.gov.uk/government/publications/royal-air-force-autonomous-collaborative-platform-strategy